Smarter Machines

Mechanical Engineering Professor Matei Ciocarlie builds robots with intelligent minds—and bodies

Matei Ciocarlie

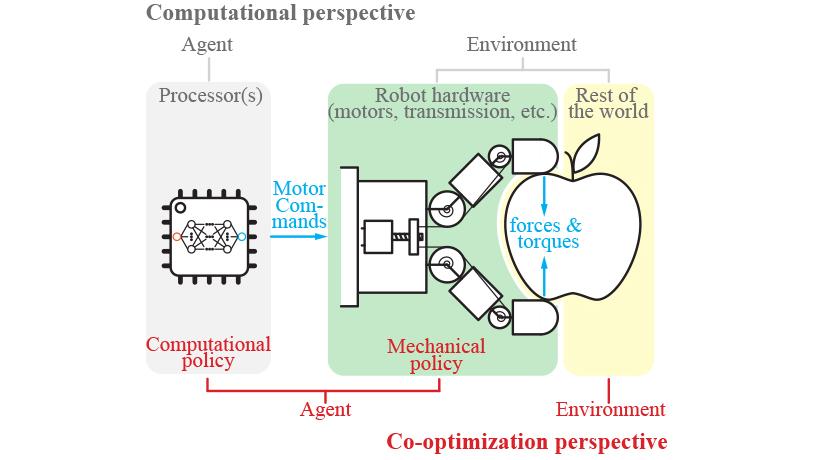

A schematic illustrating Ciocarlie's co-optimization approach

A series of conversations on pioneering research.

Since the early 1960s, when robots first hit the factory floor, automation has increasingly become a fact of daily life. But for roughly six decades, that mainly meant simple rote manufacturing. Complex physical tasks, involving manipulation and dexterity, largely remained off-limits, despite the most meticulous programming. Even as AI got vastly more clever in the past decade, besting humans in arenas like disease diagnosis and poker tournaments, robots still lagged behind, flummoxed by any cluttered, messy, or generally disorganized environment. Or in other words, the real world.

The reason these smart machines remained so dumb? Mostly because while neural networks learned to outthink us in abstract realms, translating those brains into physical abilities is an entirely different problem. Consider the sense of touch: lacking the exquisite sensitivity we're born with, motorized hands are reduced to a completely ham-fisted version of the real thing, no matter how powerful their neural engines are.

That’s all changing.

Roboticist Matei Ciocarlie develops new ways for machines to navigate our rough-and-tumble environment by pioneering a new approach to tactile sensing. His smarter machines can manipulate an unprecedented range of objects, autonomously calibrating their force as humans do to grasp as gently or forcefully as the task demands, and even assist people who’ve lost function in their own hands. Recently, he’s been tackling new environments as well, building mechanical digits for NASA’s Astrobee robot, a sort of zero-gravity assistant currently orbiting Earth, where it’s helping out with chores on the International Space Station.

Though the tasks Astrobee is designed for can seem ordinary (taking inventory, documenting experiments, shifting cargo), the underlying technology is far from routine. Indeed, endowing a robot like Astrobee with dexterity is a perfect example of the kind of human-machine collaboration that is only now becoming possible.

“Robots give physical embodiment to computer programs,” Ciocarlie says. “It takes a whole different kind of intelligence to move around mass and atoms, rather than just virtual bits.”

What is the big idea animating your research?

We are driven by the idea of embodied intelligence—physical robots displaying complex motor skills in difficult environments. There are still many areas where people have to carry out dangerous or injury-prone tasks because robots lack truly general skills like manipulation or locomotion. We think such skills can be achieved by combining mechanism design, sensing, planning, and learning—in other words, building an intelligent mind into an intelligent body.

Embodied Intelligence: Building Machine Learning into Robot Hands

Your work doesn’t just have real world impact—it’s also making an otherworldly impact. How did the collaboration with NASA come about?

We designed a perching gripper and built the first prototypes in our lab a few years back, then we open-sourced the design. The folks at NASA Ames spotted it and flight-certified it for their Astrobee robot. The Astrobee can use our gripper to grab onto handrails (thereby saving power, since it does not have to hover), but also for more complex skills like the “astrobatics” developed by the Spacecraft Robotics Laboratory at the Naval Postgraduate School, which have been demonstrated in orbit and also rely on our gripper to hold onto support rails.

Ultimately, we published a joint paper on it. One nice thing about it was that, on our side, we had an undergraduate student, an MS student, and a PhD student all working on it. It’s not every student who can say they built something that went to space.

But we didn’t stop there: we had our eyes set on more complex manipulation. We later embarked on a four-year project sponsored by NASA’s Early Stage Innovations program to research more versatile hands for robots like the Astrobee. Many students worked on it over the years, and my fellow mechanical engineer Professor Mike Massimino, a former astronaut himself, helped us throughout. There are unique challenges to creating a space robot. Such a hand must be very small to fit inside the Astrobee’s payload bay, so we couldn’t afford to use many actuators or sensors. Instead, we were able to design intelligent behaviors into the mechanism of the hand and achieve versatile manipulation even with a single motor and very limited sensing.

Our hands are an incredibly complex piece of machinery—mechanically speaking they are a marvel of strength and dexterity and astounding sensitivity, with over 400 tiny touch sensors in every square centimeter. How could a machine ever compete?

Absolutely, we believe that our hands are a key part of what makes us human. This idea goes even further: in nature, intelligence and embodiment always seem to be deeply intertwined. For example, classic experiments have shown that newborn animals need to physically interact with the world in order to learn to understand it. Furthermore, in nature, the body and the mind always evolve together, optimized with and for each other, in perfect symbiosis. Going back to manipulation, there is evidence that human hands would never have gotten a chance to type intelligent thoughts on keyboards without the anatomical remodeling that started millions of years ago to improve clubbing and throwing, and that eventually led to the mechanical marvels that you mentioned.


When it comes to AI, there's been a lot of talk about advancements in neural networks and deep learning. But to create a truly smart machine, how much is that about the brains? In other words, how much of our intelligence actually resides in our physical senses?

That is a great question: we live in the age of AI, but where does AI live? Just in the brain? We don’t think so. Sensing is obviously critical: machine learning algorithms are getting better and better at extracting information from data and identifying key patterns, but if the information is not there to begin with, there is no software in the world that can help. But physical mechanisms beyond sensors can also be intelligent. If we define intelligence as the ability to react appropriately to unforeseen circumstances, we see many instances of it in robot designs. One example is soft, passively compliant robot hands that automatically match the shape of the grasped object without any computation. This is an instance of what we often refer to as “mechanical intelligence.”

While mechanical and computational intelligence are both powerful, we believe that the greatest potential is when they are developed together and complement each other. The right hardware characteristics can make the brain easier to develop, and we can make sure that we don’t pack in any hardware complexity that the brain cannot make use of. For example, there is no point in building a robot hand that has five digits and more than 20 individually articulated joints (like the human hand does) if we don’t have a clear plan for how to handle such complexity in motor control or movement planning.

What are some developments back on Earth you're particularly excited about?

We are working on the mechanical, sensorial, and computational aspects of embodied intelligence. For example, we have been looking at how people might make use of manipulating robots. One of our projects is developing methods for non-roboticists to intuitively teleoperate dexterous robot hands, even ones that are not similar to the human hand, using compact, unobtrusive sensors such as forearm bands that can read the electrical impulses emitted by your muscles.

Returning to the human hand, we are also hoping to use robotic technology to help assist or restore manipulation capabilities to those who have lost them. In a close collaboration with the Department of Rehabilitation and Regenerative Medicine at Columbia’s Medical Center, we are developing a robotic hand orthosis for stroke patients, and testing its potential both as an assistive device that helps the wearer perform daily activities and as a rehabilitation device that helps them recover the use of the affected hand.